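Once the encodings for a frame have been computed, the script attempts to match each face in the input image to our known encodings. Every face starts with `name = "Unknown"`, so a face that is not recognized is printed as Unknown. When matches are found, the script finds the indexes of all matched faces and uses them to choose the name.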
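As a rough illustration of the matching step, the sketch below picks a name from a list of boolean match results, such as the list `face_recognition.compare_faces` returns. The vote-counting strategy, the helper name `pick_name`, and the sample names are assumptions made for illustration, since the text here does not say how the matched indexes are used.

```python
from collections import Counter


def pick_name(matches, known_names, default="Unknown"):
    """Return the most frequently matched name, or `default` if nothing matched.

    `matches` is a list of booleans, one per known encoding.
    `known_names` holds the name that belongs to each known encoding.
    """
    # find the indexes of all matched faces
    matched_idxs = [i for i, matched in enumerate(matches) if matched]
    if not matched_idxs:
        # the face is not recognized, so keep the default label
        return default

    # count how often each known name was matched (assumed voting step)
    counts = Counter(known_names[i] for i in matched_idxs)
    return counts.most_common(1)[0][0]


# hypothetical data: three known encodings belonging to two people
known_names = ["alice", "alice", "bob"]
print(pick_name([True, True, False], known_names))
print(pick_name([False, False, False], known_names))
```

Counting votes rather than taking the first match makes the result less sensitive to a single stray match when a person has several stored encodings.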

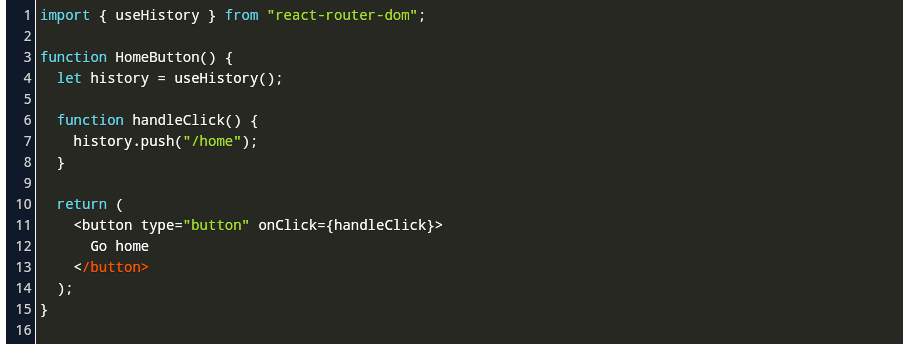

The setup and per-frame processing that precede matching run in this order:

1. Initialize `currentname` so that it triggers only when a new person is identified.
2. Determine faces from the `encodings.pickle` model file created by `train_model.py`, and set `cascade = "haarcascade_frontalface_default.xml"`.
3. Load the known faces and embeddings along with OpenCV's Haar cascade. The script prints a status message ("loading encodings + face detector."), then runs `data = pickle.loads(open(encodingsP, "rb").read())` and `detector = cv2.CascadeClassifier(cascade)`.
4. Initialize the video stream and allow the camera sensor to warm up. A Pi Camera variant is left commented out: `#vs = VideoStream(usePiCamera=True).start()`.
5. Loop over frames from the video stream. On each pass, grab the frame from the threaded video stream and resize it.
6. Convert the input frame (1) from BGR to grayscale for face detection, `gray = cv2.cvtColor(frame, cv2.COLOR_BGR2GRAY)`, and (2) from BGR to RGB for face recognition, `rgb = cv2.cvtColor(frame, cv2.COLOR_BGR2RGB)`.
7. Detect faces by calling `detectMultiScale` on `gray` with `scaleFactor=1.1`, storing the result in `rects`.
8. OpenCV returns bounding box coordinates in (x, y, w, h) order, but we need them in (top, right, bottom, left) order, so the rectangles are reordered into `boxes`.
9. Compute the facial embeddings for each face bounding box: `encodings = face_recognition.face_encodings(rgb, boxes)`.
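The coordinate reordering is easy to get wrong, so here is a minimal sketch of it. The helper name `rects_to_boxes` and the sample rectangle are hypothetical; the script's own expression for `boxes` is not shown in this text, so treat this as one plausible way to do the conversion rather than the original line.

```python
def rects_to_boxes(rects):
    """Convert (x, y, w, h) rectangles to (top, right, bottom, left) boxes."""
    # top = y, right = x + w, bottom = y + h, left = x
    return [(y, x + w, y + h, x) for (x, y, w, h) in rects]


# hypothetical detection: a 100x50 face whose top-left corner is at (10, 20)
rects = [(10, 20, 100, 50)]
print(rects_to_boxes(rects))  # [(20, 110, 70, 10)]
```

The resulting list can be passed as the second argument of `face_recognition.face_encodings(rgb, boxes)`, which expects boxes in (top, right, bottom, left) order.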
